A/B test basics

A/B tests allow you to measure which app changes or messaging strategies are most successful. Each test compares a control group of users (A) against one or more test groups, known as variants (B, C, etc.). The control group receives the default experience, and each variant group receives a different experience or message, so you can determine which is most effective.

Instead of risking negative feedback by launching untested features, you can test new features or messaging strategies on a smaller portion of your customers, optimize, and then launch in full.
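To make the idea of control and variant groups concrete, here is a minimal sketch of one common way to split users: hash each user ID, then map it to a stable bucket. Only a fraction of users (`test_fraction`) enter the test, and those users are divided evenly between the control (A) and the variants (B, C, ...). This is a generic illustration under stated assumptions, not how the A/B Tests dashboard assigns users. The function name `assign_group` and its parameters are hypothetical.

```python
import hashlib


def assign_group(user_id: str, test_id: str, test_fraction: float, variants: list[str]) -> str:
    """Return "control", a variant name, or "not_in_test" for a user.

    Hypothetical helper. Hashing user_id together with test_id gives each
    user a stable bucket per test, so a user always sees the same experience.
    """
    digest = hashlib.sha256(f"{test_id}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits (32 bits) to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    if bucket >= test_fraction:
        # Outside the test: the user keeps the default experience.
        return "not_in_test"
    # Split in-test users evenly between control (A) and the variants.
    groups = ["control"] + variants
    index = int(bucket / test_fraction * len(groups))
    return groups[min(index, len(groups) - 1)]


# Usage: put 20% of users into a test with control plus two variants.
counts: dict[str, int] = {}
for i in range(10000):
    group = assign_group(f"user-{i}", "onboarding-test", 0.2, ["B", "C"])
    counts[group] = counts.get(group, 0) + 1
print(counts)
```

Hashing instead of random draws keeps assignment deterministic: a returning user lands in the same group without any stored state. Including the test ID in the hash also keeps groupings independent across different tests.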

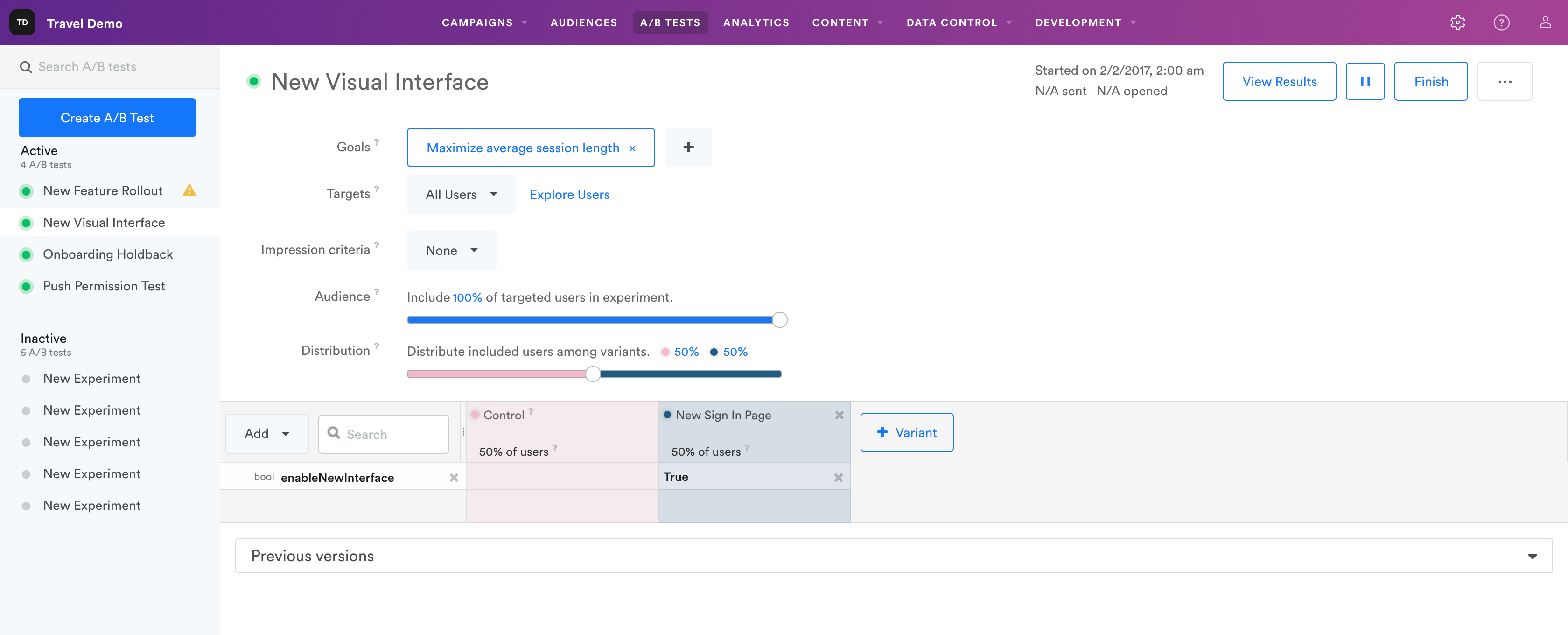

In the A/B Tests dashboard, you can view all of your current and past A/B tests, their status (active or inactive), and the goals and targeting options for each.

What you can A/B test

You can A/B test your app's messages and variables.

Messaging

- Message copy

- Delivery channels and timing

- In-app triggers

- Audience segments

- Lifecycle campaigns

- Permission requests

- Onboarding tutorials

- Cart abandonment campaigns

- Promotions

Variables

- Assets

- Booleans

- Strings

- Colors

- Floats

- Arrays

- Dictionaries

- Custom structured data
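Conceptually, an A/B test on variables gives the control group the default values and lets a variant override only the values under test. The sketch below models this with plain Python dictionaries that cover several of the variable types listed above. It is a hypothetical illustration and does not use the Leanplum SDK's API. The variable names, values, and the `resolve_variables` helper are all invented for the example.

```python
from typing import Any

# Hypothetical default values: what the control group (A) receives.
DEFAULTS: dict[str, Any] = {
    "show_tutorial": True,                          # Boolean
    "welcome_text": "Welcome back!",                # String
    "button_color": "#1E88E5",                      # Color, stored as a hex string
    "discount_rate": 0.10,                          # Float
    "featured_items": ["shoes", "bags"],            # Array
    "promo": {"title": "Spring Sale", "days": 7},   # Dictionary
}

# Hypothetical variant (B): only the values under test are overridden.
VARIANT_B_OVERRIDES: dict[str, Any] = {
    "welcome_text": "Good to see you again!",
    "discount_rate": 0.15,
}


def resolve_variables(group: str) -> dict[str, Any]:
    """Return the variable values a user in `group` should receive."""
    values = dict(DEFAULTS)  # shallow copy so applying overrides never changes DEFAULTS
    if group == "B":
        values.update(VARIANT_B_OVERRIDES)
    return values


print(resolve_variables("control")["welcome_text"])
print(resolve_variables("B")["welcome_text"])
```

Keeping the overrides small means any difference in results can be attributed to the few values the variant actually changes.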

Creating a test

To get started, jump to Creating an A/B test.

Seeing test results

You can see the results of your A/B test in the Analytics dashboard. For details, see A/B test analytics.

You can view A/B test analytics at any time during or after the test, whether the test is active or paused. Note that it may take two to four hours for A/B test results to show up in Analytics.

Choosing a winning variant

Once you are satisfied with your test results, you can finish your test and roll out the winning variant. Navigate back to your test’s setup page to merge the winning changes to your app or campaign.
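Before you pick a winner, it helps to check whether a variant's improvement over the control is larger than random noise would explain. The sketch below uses a standard pooled two-proportion z-test to compare the conversion rates of control (A) and one variant (B). This is a generic statistical technique chosen for illustration. It is not necessarily how the Analytics dashboard computes significance, and the conversion counts in the usage example are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare the conversion rates of control (A) and a variant (B).

    Returns (z, two_sided_p_value) from a pooled two-proportion z-test.
    A positive z means the variant converted better than the control.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Every user converted, or none did: the test cannot tell the groups apart.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: control converts 200 of 2,000 users, variant B 260 of 2,000.
z, p = two_proportion_z_test(200, 2000, 260, 2000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (for example, below 0.05) suggests the difference is unlikely to be due to chance alone. Compare against the control separately for each variant, and keep in mind that testing many variants at once raises the odds of a false positive.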

For more about A/B tests, read A/B test troubleshooting and FAQ.
