A/B tests FAQ

What is the control variant?

The control variant is the baseline that the other variants in an A/B test are compared against. It is typically the standard experience served to users, whether that experience is in the app or in a message.

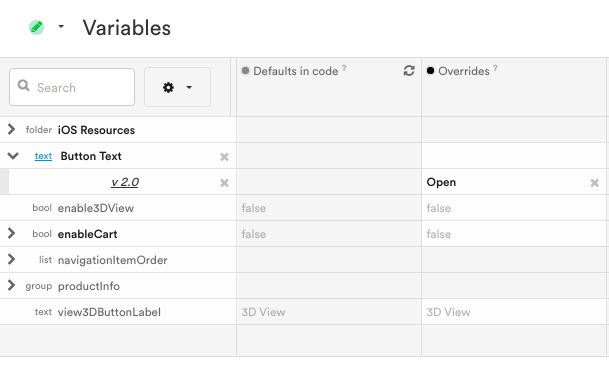

If you are testing a variable, the control is the override value set on the Variables Dashboard. If no override has been set, the control uses the default value defined in your code.
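The resolution rule above can be sketched as a small function. This is a minimal illustration that assumes the override is either a value or absent; `control_value`, `override`, and `code_default` are hypothetical names, not part of any Leanplum SDK.

```python
from typing import Optional


def control_value(override: Optional[str], code_default: str) -> str:
    """Hypothetical model: the control uses the Variables Dashboard override
    if one has been set, otherwise the default value defined in code."""
    return override if override is not None else code_default
```

For example, with no override the control falls back to the code default, and once an override is set the control uses it instead.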

How do I prevent users from seeing an A/B test while I'm setting it up?

Your users won't see your changes as long as the A/B test is unpublished; only development devices may see them. You may also want to pause any messages you plan to test.

Can a user enter into two tests at the same time?

If a user fits the targeting for more than one test, then yes, that user could be sorted into multiple tests at once.

Multiple tests for one variable

When a user qualifies for multiple A/B tests affecting the same variable, Leanplum will show that user the experience for whichever test was published first. If you want to ensure that users see the newer test, do one of the following:

- Make sure the targets for the two tests are mutually exclusive, or

- Finish any other tests affecting the variable before creating a new test
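The first-published rule can be modeled as picking, among the tests a user qualifies for that affect the variable, the one with the earliest publish time. The `ABTest` class and `winning_test` function below are hypothetical and for illustration only; they are not Leanplum's implementation.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class ABTest:
    name: str
    variable: str           # the variable this test changes
    published_at: datetime  # when the test was published


def winning_test(qualifying_tests: List[ABTest], variable: str) -> Optional[ABTest]:
    """Hypothetical model: among the tests a user qualifies for that affect
    `variable`, the earliest-published one determines what the user sees."""
    candidates = [t for t in qualifying_tests if t.variable == variable]
    return min(candidates, key=lambda t: t.published_at, default=None)
```

In this model, making the two tests' targets mutually exclusive means the user only ever qualifies for one candidate, so the publish order no longer matters.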

How do I target an A/B test to new users only?

Under Targets, choose Behavioral > First-time users.

By default, targeting is sticky: new users who saw the A/B test remain in it past their first session. If you want new users to see the A/B test only in their first session, click the magnet icon to turn off sticky targeting.

What happens when a user logs in on two devices and is a part of an A/B test?

For more information, see the tutorial Handle same User login on different devices.

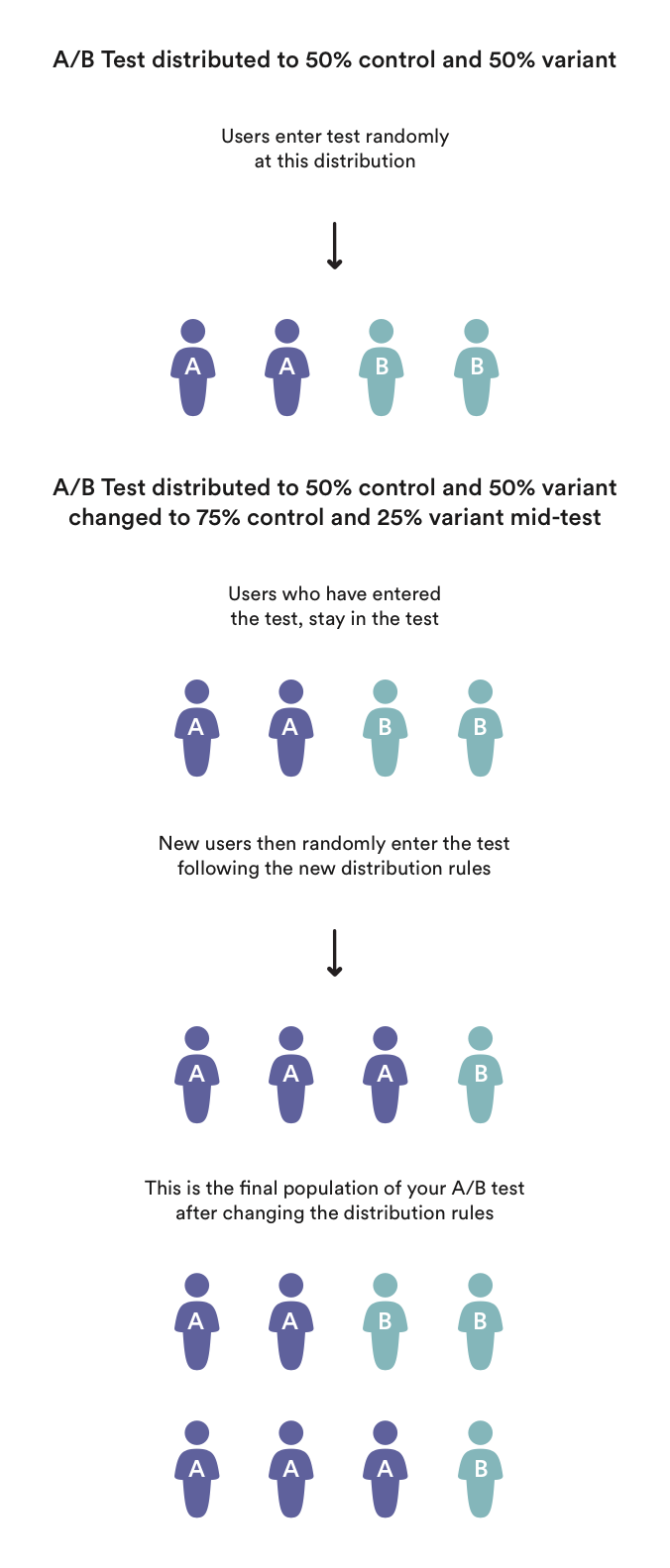

What happens to users in a variant when I change its percentage?

To maintain a consistent user experience, users in a variant will remain in that variant until one of the following happens:

- The A/B test is deleted.

- The variant is deleted.

- The user falls outside of the A/B test's targeting group, and the targeting is not sticky.

If the A/B test is paused and resumed, the user remains in the same variant.

If the distribution is changed mid-experiment:

- Enrolled users will stay in the same variant/control that they were originally assigned to.

- Only new users will come into the experiment at the new distribution.

In this example, the original distribution is 50/50, so half of the new users who come in (2) go into the control and half go into the variant.

On day 2, we adjust the distribution to 75/25. Only new users who come in after the change follow the new distribution. Leanplum will NOT attempt to compensate by placing extra users into the control until the total pool of users reaches 75/25.
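A minimal Python model of this behavior, assuming assignment is a weighted random draw (an assumption; this FAQ does not say how Leanplum assigns users). The `Experiment` class and its methods are hypothetical. The point it illustrates is that changing the distribution only affects users who have not been assigned yet; nobody already enrolled is moved or rebalanced.

```python
import random
from typing import Dict


class Experiment:
    """Hypothetical model of mid-experiment distribution changes;
    not Leanplum's implementation."""

    def __init__(self, weights: Dict[str, float], seed: int = 0) -> None:
        self.weights = dict(weights)            # e.g. {"control": 50, "variant": 50}
        self.assignments: Dict[str, str] = {}   # user_id -> group, fixed once assigned
        self._rng = random.Random(seed)

    def set_distribution(self, weights: Dict[str, float]) -> None:
        # Only changes the weights used for future draws; existing
        # assignments are left untouched and no rebalancing happens.
        self.weights = dict(weights)

    def enroll(self, user_id: str) -> str:
        if user_id in self.assignments:
            return self.assignments[user_id]    # enrolled users keep their group
        groups = list(self.weights)
        group = self._rng.choices(groups, weights=[self.weights[g] for g in groups])[0]
        self.assignments[user_id] = group
        return group
```

For example, enroll two users under `{"control": 50, "variant": 50}`, then call `set_distribution({"control": 75, "variant": 25})`: calling `enroll` again for those two users returns their original groups, and only users enrolling afterward are drawn at 75/25.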

How do I make an A/B test start or end at a particular time?

Add a Behavioral > Session start target to the test.

- To start automatically: Session start is since (start date).

- To end automatically: Session start is until (end date).

- Both: Session start is between (start date) and (end date).
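The three options amount to an optional lower and upper bound on the session start time. The sketch below assumes both bounds are inclusive, which this FAQ does not specify; `in_session_window` is a hypothetical helper, not a Leanplum API.

```python
from datetime import datetime
from typing import Optional


def in_session_window(session_start: datetime,
                      since: Optional[datetime] = None,
                      until: Optional[datetime] = None) -> bool:
    """Hypothetical model of 'Session start is since / until / between'.
    Passing only `since` starts the test, only `until` ends it, both gives a window.
    Bounds are treated as inclusive (an assumption)."""
    if since is not None and session_start < since:
        return False
    if until is not None and session_start > until:
        return False
    return True
```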

How are user counts calculated for A/B tests?

It's fairly common to see slightly different numbers for the same message in the Message composer and on the Analytics dashboard. The difference comes from how each of those "counters" is calculated and how often its data is refreshed.

Why am I seeing an unknown segment in the variant of my A/B test?

Situation:

You have started an A/B test. Later, you notice that a segment is listed within a variant of the A/B test, but you cannot remove it. Why is the segment there, and how can you remove it?

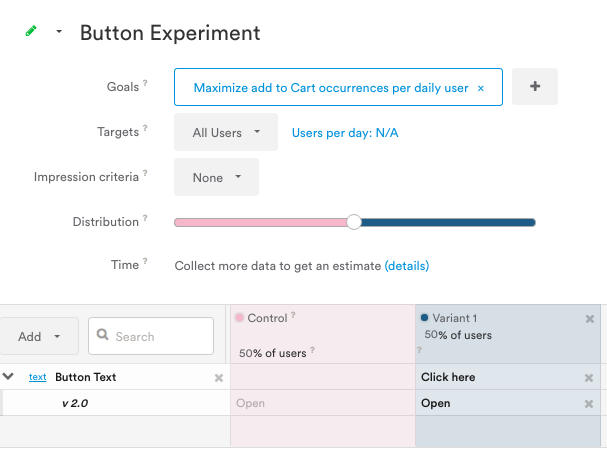

Example:

The unknown segment here is targeting version 2.0 and has a default value of "Open".

Reason:

The segment was added directly to the variable on the variables page. When you add a segment to a variable on the variables page, that segment is also carried over to the live A/B test that uses the variable, so you don't risk changing that group's experience.

Result:

The "override" value applied to the segment on the variables page is carried over to the control variant of the A/B test.

If you remove the segment from the variable on the variables page, you'll then be able to remove it from the test as well.

Is it possible for a user to see multiple different variants in a single test?

If your test's target is sticky (the magnet button is selected and blue), users remain in whichever variant (or control) group they were originally assigned to for the duration of the test. Even if a user no longer fits the target criteria for your test, they stay in that group, which means they see only that group's experience until you finish the test.

If your test is not sticky, a user remains in their randomly assigned group only while they fit the target criteria for the test. If the user exits the target group, they exit the test; if they later re-enter it, they are randomly assigned again, as if they were a new user. This means they can see multiple variant experiences, especially if their target status changes often.

For example, if the target of a non-sticky test was "Los Angeles users," and one of the users started in LA then went to Chicago, they would no longer be in the test. If that user returned to Los Angeles, they would fit the target criteria once again, and they could be sorted into one of the variants at random.
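The difference between sticky and non-sticky targeting can be modeled as follows. This is a hypothetical sketch (the `TargetedTest` class and `evaluate` method are illustrative, not Leanplum code) that assumes a uniform random draw among groups and that targeting is re-evaluated once per session.

```python
import random
from typing import Dict, List, Optional


class TargetedTest:
    """Hypothetical model of sticky vs. non-sticky targeting."""

    def __init__(self, groups: List[str], sticky: bool, seed: int = 0) -> None:
        self.groups = list(groups)              # e.g. ["control", "variant"]
        self.sticky = sticky
        self.assignments: Dict[str, str] = {}   # user_id -> current group
        self._rng = random.Random(seed)

    def evaluate(self, user_id: str, fits_target: bool) -> Optional[str]:
        """Return the user's group for this session, or None if they are not in the test."""
        if user_id in self.assignments:
            if fits_target or self.sticky:
                return self.assignments[user_id]  # sticky users keep their group
            # Non-sticky: leaving the target removes the user from the test.
            del self.assignments[user_id]
            return None
        if not fits_target:
            return None
        # New (or re-entering non-sticky) users get a fresh random group.
        group = self._rng.choice(self.groups)
        self.assignments[user_id] = group
        return group
```

Mapping the Los Angeles example onto this model: the user's session in LA calls `evaluate(user, True)`, the session in Chicago calls `evaluate(user, False)`, and the return to LA calls `evaluate(user, True)` again. With `sticky=False`, the Chicago session drops the user from the test and the return draws a new random group; with `sticky=True`, all three sessions return the original group.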

If I delete an event, what happens to the analytics related to that event? Can I still see these test results?

At some point, you may want to delete events that your app no longer uses. Note that once you delete an event, you won't be able to see that event in analytics or in the results of an A/B test.

If you want to save the results related to these events, you can export statistics for A/B tests and other reports before you delete the event(s). See API export methods for more.
